I'm announcing my latest open source project: a WebRTC chat room with asynchronous video support that I'm calling BareRTC. It is very much styled after the classic old-school Flash-based chat rooms that were popular in the early 2000s.

I have a demo of it available here: https://chat.kirsle.net/. I can't guarantee I'll be lurking in that room at any given time but you can test it out yourself on a couple of devices or send the link to some friends and see how it works.

Its primary features are:

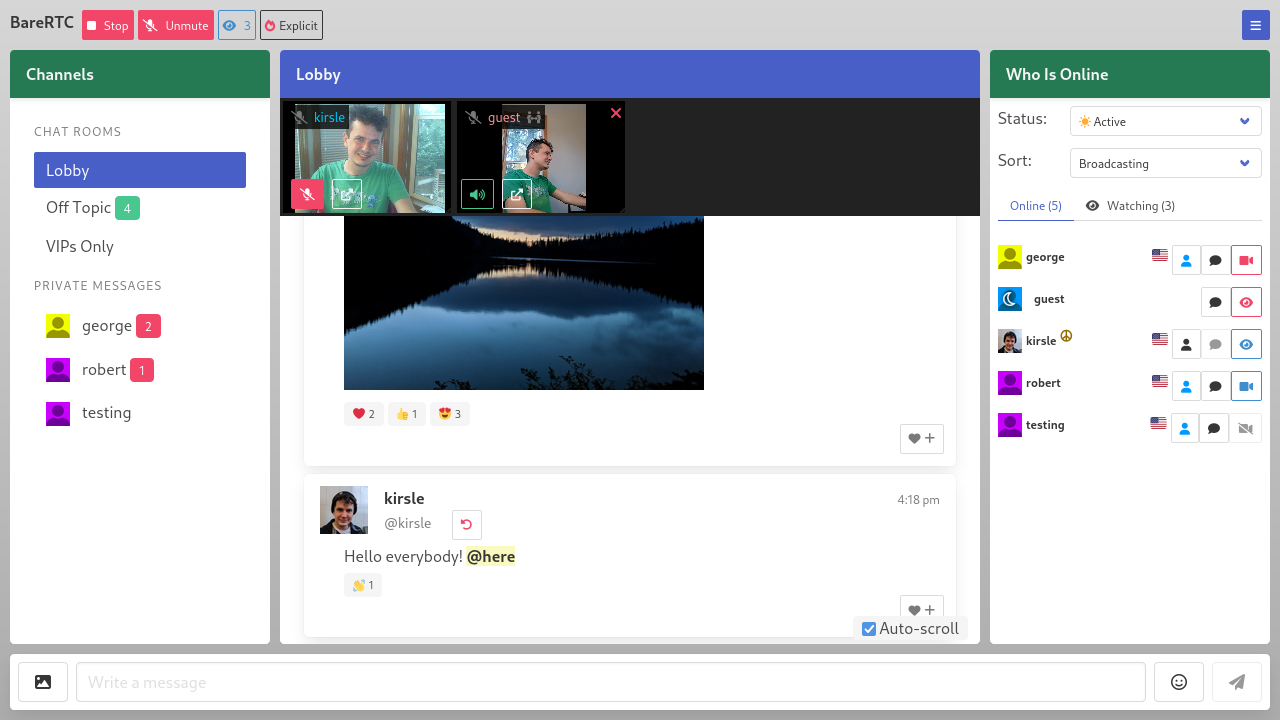

The back-end is written in Go and it should easily install onto any server. With WebRTC, the webcam streams are peer-to-peer so you don't need a server in the middle to relay all that video bandwidth (which could be expensive!). Here is a screenshot from the source repo:

In this blog post I'll talk a bit about the technicals and difficulties I ran into getting this app to work.

One of my side projects is nonshy, a social networking site for nudists and exhibitionists. I wanted a chat room for it and I wanted it to work as described above: where some users can be on video but the room isn't all about video and is primarily a text chat room, as not everybody has a camera or the will to be broadcasting on it at all times.

I really expected that a chat room like this would already exist out there as a free and open source project that I could plug in to my site. There were so many Flash-based rooms just like this in the early 2000s, and WebRTC is a standard web technology now. There are lots of open source apps and libraries that use WebRTC, but none of them worked like these classic old chat rooms did. Most WebRTC apps are in the style of Zoom or Jitsi, where it's expected that all users will be on video.

I didn't want to build my own chat room, but as there was nothing suitable for me to use I had to do it myself. It was a fun learning process to play with WebRTC for the first time.

My chat app now fills that void. I didn't want to build it specifically for my social networking site, so it's a stand-alone app that plugs in to any existing userbase by letting your existing site sign a JWT token to log users in. With the JWT you can also convey the user's profile picture, profile URL and admin/operator status as they enter the chat.
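As a rough illustration of the idea, here is a minimal sketch of how an existing site might sign such a token in Node.js with a shared secret. It assumes an HMAC-SHA256 (HS256) signature. Apart from the standard `sub` and `exp` claims, the claim names (`avatarUrl`, `profileUrl`, `isOperator`) and the secret are hypothetical placeholders, not BareRTC's actual field names; the project's Authentication.md is the place to check what it really expects.

```javascript
const nodeCrypto = require("node:crypto");

// Base64url-encode a string or Buffer (the unpadded, URL-safe form JWTs use).
function base64url(input) {
  return Buffer.from(input).toString("base64url");
}

// Build and sign an HS256 JWT from a claims object and a shared secret.
function signJwt(claims, secret) {
  const header = base64url(JSON.stringify({ alg: "HS256", typ: "JWT" }));
  const payload = base64url(JSON.stringify(claims));
  const signature = nodeCrypto
    .createHmac("sha256", secret)
    .update(`${header}.${payload}`)
    .digest();
  return `${header}.${payload}.${base64url(signature)}`;
}

// Hypothetical claims for a user entering the chat room.
const token = signJwt(
  {
    sub: "alice", // username (standard "subject" claim)
    exp: Math.floor(Date.now() / 1000) + 300, // expires in five minutes
    avatarUrl: "https://example.com/alice.png", // hypothetical claim name
    profileUrl: "https://example.com/u/alice", // hypothetical claim name
    isOperator: false, // hypothetical claim name
  },
  "example-shared-secret" // placeholder; both sides must share the real secret
);
console.log(token);
```

A short expiry keeps a leaked chat link from being reusable for long. In a real site a maintained JWT library is usually a better choice than hand-rolling the encoding.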

I named it "BareRTC" for the punny word play: I was "grumpy like a bear" that I had to program this damn thing myself and for the play on words that the itch I was scratching was for a nudist website in particular.

WebRTC is a web standard that allows two browsers to connect to each other (peer-to-peer, ideally) and transmit arbitrary data between them -- usually, video and audio data. Most modern video apps including Zoom, Jitsi Meet, Discord and others are using WebRTC to get video chat to work in a web browser.

The great thing about WebRTC is that it's peer-to-peer so you don't need a server to relay video frames back and forth between users -- saving you a lot of money in bandwidth costs. WebRTC usually works even if both sides of the connection are behind firewalls (such as most home users with a WiFi router that uses NAT). In case neither side can connect to the other, "enterprisey" WebRTC applications will fall back on using a server in the middle to relay video frames back and forth (called a TURN server). My app does not support TURN servers yet, but peer-to-peer video usually works in "most" cases.

These are the things I learned along the way, as this is my very first WebRTC project.

First, the two web browsers need a way to negotiate how they'll connect to one another and what features they will support (data channels, video or audio streams, and which codecs to use with those, and so on). To handle this initial negotiation process, you need a signaling server which is just any server that can echo messages back and forth between the two clients.

My chat room (before adding video support) already had a signaling server: I am using WebSockets to handle the server side of the chat protocol. The web page front-end connects to the WebSockets server to log in with a username and send and receive chat messages.

So I have two users "alice" and "bob" who want to connect peer-to-peer and share video. I programmed my WebSocket server to also be the signaling server for WebRTC: Alice sends a message to my server meant for Bob and my server forwards it along, and the two of them share "ICE candidates" and "SDP messages" back and forth which is how they negotiate how they'll connect to each other (ICE) and what features they will support (SDP). This is the easy part of WebRTC: your back-end signaling server just gives them a method to relay these to each other and then the two browsers have everything they need to (hopefully) establish a peer-to-peer connection directly between themselves.
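The relay step can be sketched in a few lines. This is not the actual BareRTC server (which is written in Go); it is a minimal JavaScript sketch of the same idea, and the `SignalingHub` class, the `to`/`from`/`kind`/`payload` message fields and the `send` callbacks are all hypothetical names standing in for real WebSocket connections.

```javascript
// A minimal signaling relay: it never inspects the ICE/SDP payload,
// it only forwards each message to the user it is addressed to.
class SignalingHub {
  constructor() {
    // username -> function that delivers a raw message to that user
    // (in a real server this would wrap a WebSocket connection).
    this.clients = new Map();
  }

  join(username, send) {
    this.clients.set(username, send);
  }

  leave(username) {
    this.clients.delete(username);
  }

  // Forward a message from `from` to `msg.to`. The sender name is stamped
  // by the server so a client cannot pretend to be someone else.
  // Returns false if the recipient is not connected.
  relay(from, msg) {
    const send = this.clients.get(msg.to);
    if (!send) return false;
    send(JSON.stringify({ ...msg, from }));
    return true;
  }
}

// Usage: alice sends an SDP offer meant for bob.
const hub = new SignalingHub();
hub.join("alice", (raw) => console.log("to alice:", raw));
hub.join("bob", (raw) => console.log("to bob:", raw));
hub.relay("alice", { to: "bob", kind: "sdp", payload: { type: "offer", sdp: "..." } });
```

Each browser would handle an incoming `kind: "sdp"` or `kind: "candidate"` message by passing the payload to its own RTCPeerConnection; the server's only job is routing.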

The part I was getting stuck on the most was that my chat room's video feature is asynchronous: one user is broadcasting a video, and the one who wants to tune in to that might not be broadcasting their own. I could see the ICE and SDP messages being sent between the two users but then nothing would happen: the receiving party was not getting the video streams sent by the caster.

But if the receiver was also casting their own video, the two could connect fine and see each other's video!

I found out this is because in the SDP negotiation process, the one connecting (the "offerer") negotiates what features they expect to share. If they are not sharing their own video, they don't request video support, and so even though the "answerer" added their video streams to the connection, that video is never actually delivered to the offerer.

The fix was that when you call createOffer() you have to pass {offerToReceiveVideo: true, offerToReceiveAudio: true}, and then all is well.
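In code, the viewer's side of that fix might look roughly like the sketch below. The `offerToWatch` function, the `sendSignal` callback and the message shape are hypothetical; `pc` is expected to be a browser RTCPeerConnection, and only the two createOffer() options come from the fix described above.

```javascript
// Create an offer that asks to RECEIVE video and audio even though the
// viewer is not broadcasting anything themselves.
//   pc         - an RTCPeerConnection (browser API)
//   target     - username of the broadcaster to tune in to
//   sendSignal - hypothetical function that relays a message through the
//                signaling server (e.g. over the chat WebSocket)
async function offerToWatch(pc, target, sendSignal) {
  const offer = await pc.createOffer({
    offerToReceiveVideo: true,
    offerToReceiveAudio: true,
  });
  await pc.setLocalDescription(offer);
  sendSignal({ to: target, kind: "sdp", payload: pc.localDescription });
  return offer;
}

// In a browser this might be called as:
//   const pc = new RTCPeerConnection();
//   pc.ontrack = (event) => { videoElement.srcObject = event.streams[0]; };
//   await offerToWatch(pc, "bob", (msg) => socket.send(JSON.stringify(msg)));
```

These two options are the legacy form of the API. The standards-track way to express the same intent is to add receive-only transceivers with pc.addTransceiver("video", { direction: "recvonly" }) before creating the offer.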

Update (June 21 2025): It was slightly more complicated for Safari browsers, which don't support the legacy WebRTC protocol. I have posted an update here that goes into how I fixed it for Safari, so definitely check that out!

A crucial part to my success building this app was this barebones WebRTC video chat demo.

It's only 119 lines of basic JavaScript code that quickly gets two browsers into a video call with each other. It uses a service called ScaleDrone for the signaling server (a simple channel that relays the ICE/SDP messages between the two browsers). I do not use ScaleDrone in my app, but I could see how the JavaScript used it and had my own WebSocket server do the same for my needs.

Some of the quirks that I had to deal with comparing my app to this example were:

My chat room app is released under the GNU General Public License v3 and if you wanted a chat room like this for your website, you're free to check my project out! At some point I may put a mirror to my code on GitHub to allow social development and pull requests for others if you'd like to help improve the feature set.

Check out the source at https://git.kirsle.net/apps/BareRTC

There are 2 comments on this page.

Can it communicate with Joomla, to integrate member profiles and avatars?

@Arielle:

I don't have a Joomla integration specifically. You may need to write some custom PHP code to sign a JWT token to log users into BareRTC.

Here is a JWT library for PHP: https://github.com/firebase/php-jwt

And details for how the JWT token should look to log your user into BareRTC: https://git.kirsle.net/apps/BareRTC/src/branch/master/docs/Authentication.md
